The macOS version of the cp Unix command won’t create links

I ran into this while working on a Keyboard Maestro macro that creates hard links: The macOS version of cp won't create links, at least not in Sonoma. In Ventura, it works even though it throws the same error as it does in Sonoma.

Copying as hard links is part of the cp feature set, fully covered in its man pages. But it doesn't work in macOS. To confirm, try this:

```
$ mkdir temp
$ cd temp
$ touch testfile
$ cp -al testfile linkfile
cp: linkfile: Bad file descriptor
$ ls -il
total 0
90783664 -rw-r--r--  1 robg  staff  0 Oct 10 08:41 testfile
$ ln testfile linkfile
$ ls -il
total 0
90783664 -rw-r--r--  2 robg  staff  0 Oct 10 08:41 linkfile
90783664 -rw-r--r--  2 robg  staff  0 Oct 10 08:41 testfile
```

When I ran into this, I searched and discovered that someone else had run into the same issue (Apple Developer login required), but that's the only mention I could find.

I have filed this bug as FB13255408 with Apple, and I'm hopeful they fix it soon. There is a workaround, obviously: use ln instead. This works fine for individual hard links, but using cp to quickly copy an entire folder as hard links is much more convenient.
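
Until cp is fixed, the folder case can be approximated with find and ln. What follows is only a minimal sketch, not a drop-in replacement for cp: the function name link_tree is my own invention, and it assumes the source and destination are on the same volume (hard links can't cross volumes), that the destination isn't inside the source, and that no file names contain newlines. It also skips symbolic links and other special files.

```shell
#!/bin/sh
# link_tree (hypothetical helper): rebuild the directory tree of SRC under
# DST, hard-linking each regular file with ln instead of copying it.
link_tree() {
    src=$1
    dst=$2

    # Directories can't be hard-linked, so recreate them first.
    (cd "$src" && find . -type d) | while IFS= read -r dir; do
        mkdir -p "$dst/$dir"
    done

    # Then hard-link every regular file into the matching spot.
    (cd "$src" && find . -type f) | while IFS= read -r file; do
        ln "$src/$file" "$dst/$file"
    done
}
```

With that defined, something like `link_tree myfolder myfolder-links` (both names are placeholders) should produce a second folder whose files share inodes with the originals, which you can check with `ls -il` as above.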

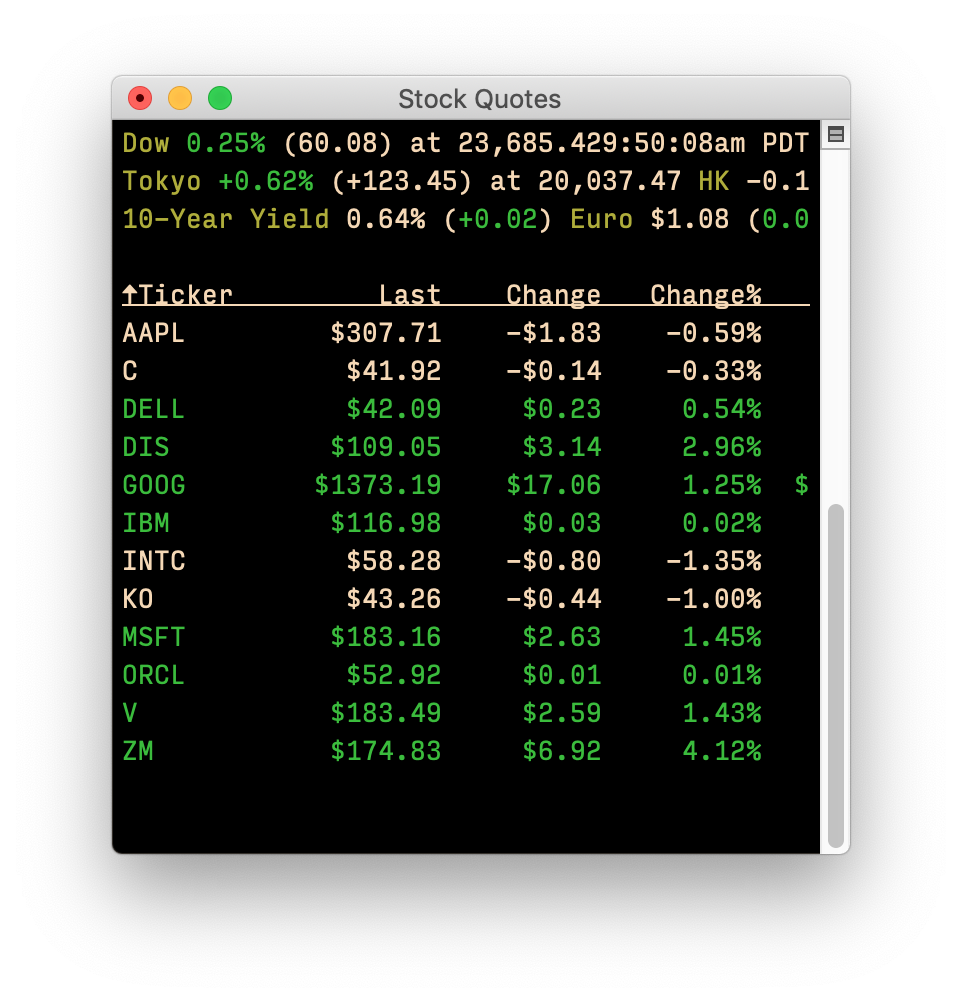
