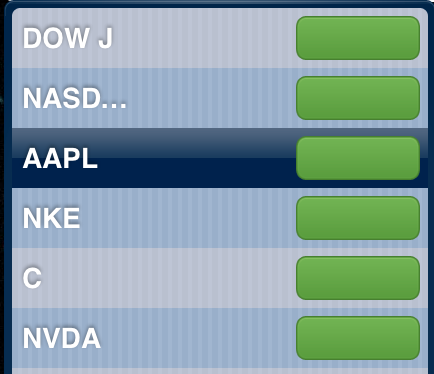

My main machine is still running Mojave, and will be for some time—our accounting app and my scanner both rely on 32-bit code. For a very long time, I've been using the built-in Stocks widget from the Dashboard (something else that's gone in 10.15) to track stocks I own or am interested in following.

I have two displays, so I just dedicate a small corner on one of them for the Dashboard widget, which I detach from the Dashboard using an old but still functional Dashboard devmode hint. The Stocks Dashboard widget is quite narrow, and not all that tall, so it didn't take a lot of space.

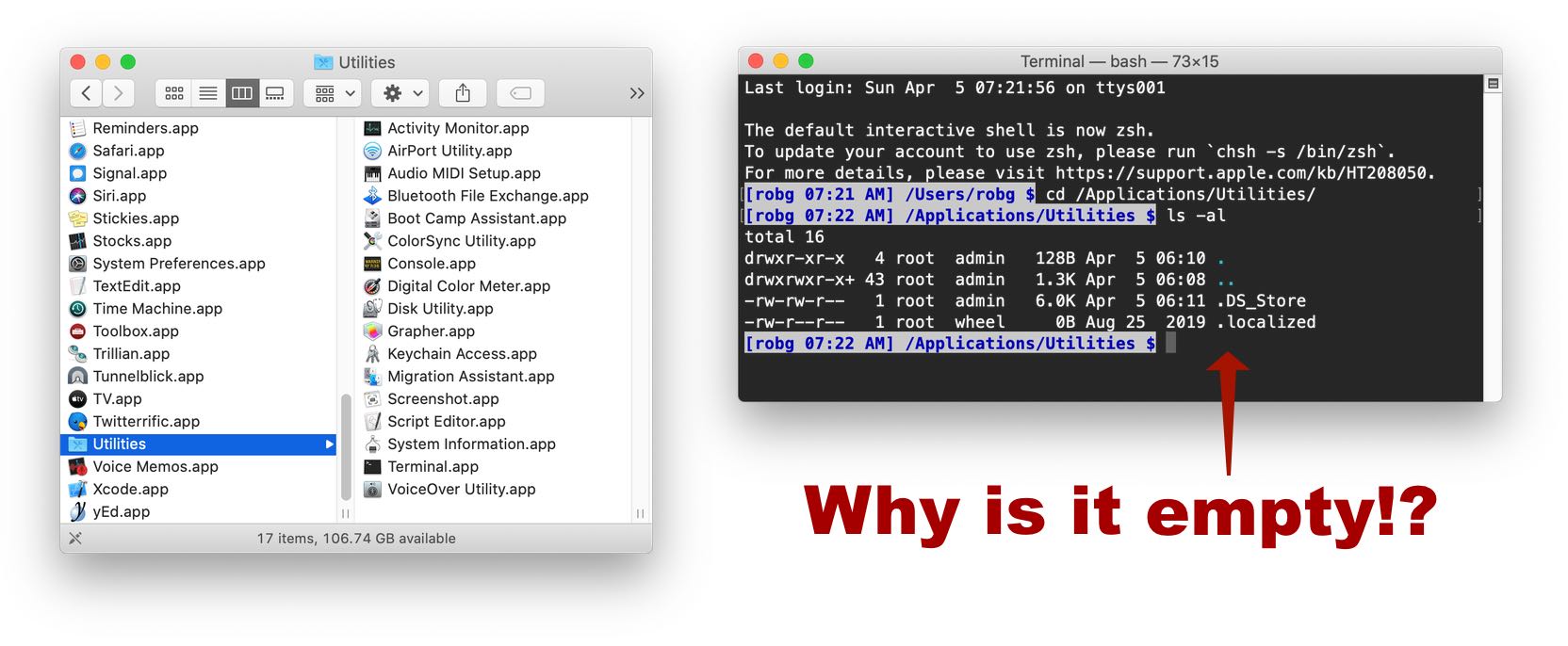

But recently, it broke, as you can see in the image at right. I set out looking for a replacement—just a simple desktop app that would open a window with stock quotes. Apple's own Stocks app doesn't meet my needs—it has a huge News area you can't close. Similarly, the Stocks section of the Today area in Notification Center requires mouse movement and action on my part to see.

I took a look at any number of third-party apps, but all of them were either full-blown stock traders/managers, lived in the menu bar or Dock, or were discontinued. I finally found what I was looking for, not in a desktop application, but in mop—an open source Go program—running in Terminal.

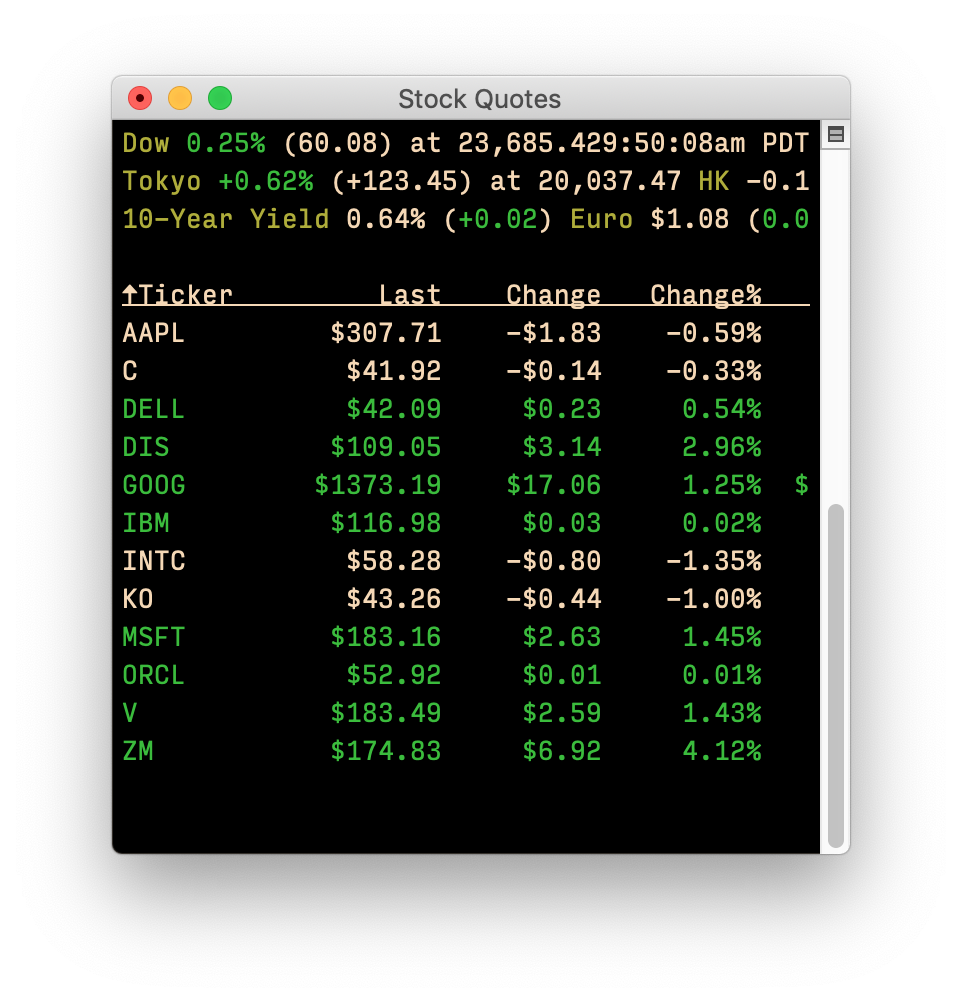

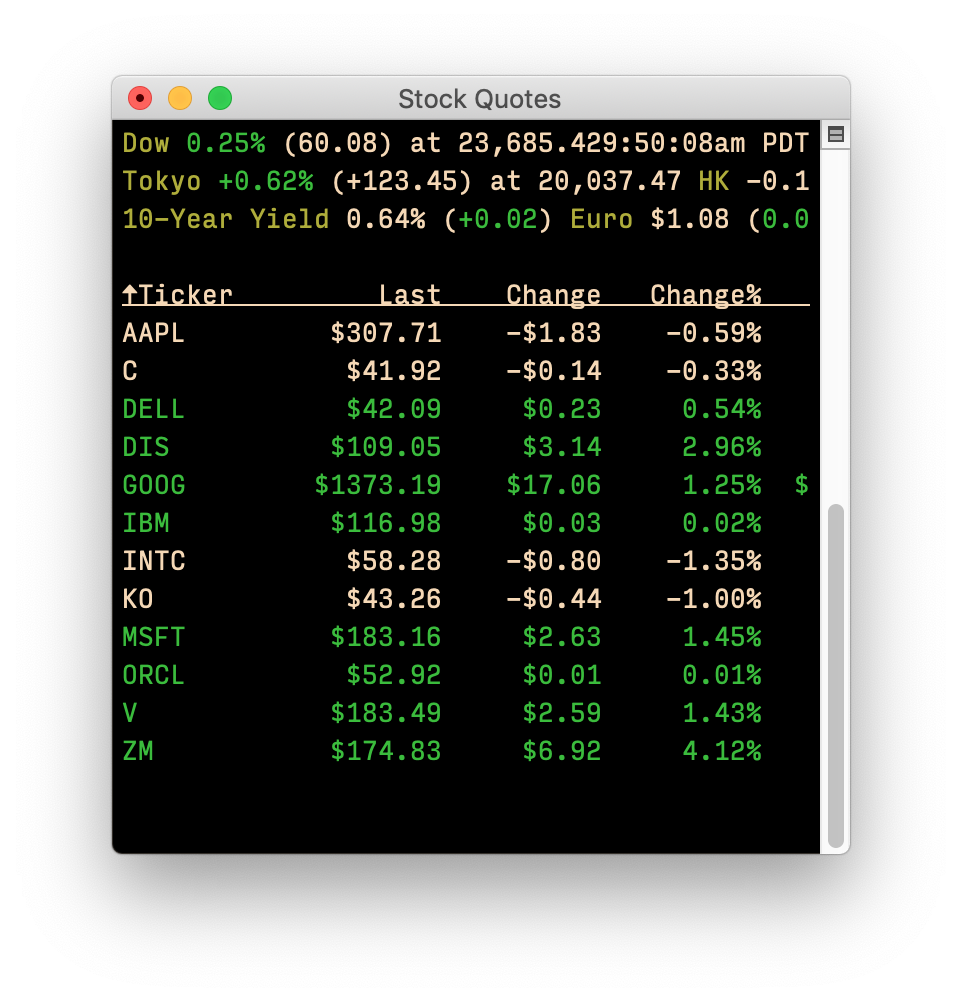

After a bit of setup work, here's what I'm now seeing (while I wish I had bought a lot of these years ago, I didn't; these are just some sample stocks) on my desktop:

Yes, the window is slightly wider than my old one (it's actually incredibly wide, but I don't need to see the other columns), but it's not as tall, and I was able to find a spot for it. If you'd like to try mop yourself, setup is relatively simple.

[continue reading…]
