I’ll start off with an admission: I’m a relatively clueless user of the command line in OS X. Sure, I know my way around basics such as ls, cp, and mv, I have a working knowledge of vi, and I have a basic understanding of some of the more advanced programs. But that’s about it: minimal shell scripting skills, no knowledge of regular expressions, and only the most basic understanding of pipes, redirection, combining commands, etc. So I find myself regularly amazed by the power of what (for a Unix wizard) would be an amazingly simple task.

Such was the case yesterday. Earlier in the day, I’d had a bit of a scare with our family blog site (like robservatory, it runs on WordPress). Due to a mix-up on the administrative end, the WordPress database for the site was deleted. Historically, I’ve been very paranoid about backing up the macosxhints’ sites. But for whatever reason, that same paranoia didn’t extend to my two personal sites. Hence, I had no backup to help with the problem. Thankfully, the ISP did, and the family blog was soon back online without any loss of data. But I resolved not to let this happen again without a local backup of my own.

For the hints site, I was already using cron and ssh to automate the backups, as I described way back in 2001. So it was a simple matter to take those scripts and modify them for the robservatory and family blog sites, which I quickly did. That made me feel somewhat better, but I also wanted to back up the sites’ data files. For hints, what I had been doing was to create a tar file of all the files on the site. I then used cron to create and download this file once a week. But I knew there had to be a better way.

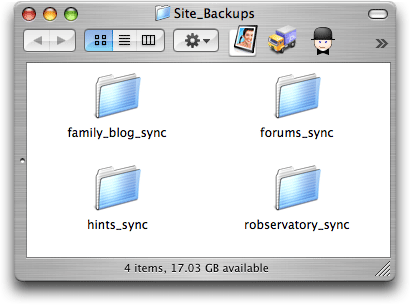

Enter rsync, a powerful tool for create a synchronized copy of files from one machine on another machine. Operation is basically automatic, it seems, and it even uses ssh for secure file transfers. After reading through the man pages, my basic needs seemed simple enough, so I set up a directory and gave it a shot…and, as with most things I do in Unix, I was then stunned when it worked as described! The initial run took quite a while, obviously—my family blog site has nearly 2gb of data on it (mostly movies of our daughter). But after that, future rsync updates were amazingly fast. I was thrilled—I now had a local copy of the exact structure of the site, kept in sync with one (relatively simple) command. Feeling confident now, I then set up rsync updates for each of my sites, leading to this wonderfully reassuring directory on my machine:

Within each of those folders is an exact duplicate of each site’s files, along with the SQL backups from my previously created script. The last step was to simply use cron to have my SQL and rsync backup scripts run each day. Presto! Daily dumps of the SQL file, along with a freshly updated, synced copy of each site’s files.
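The cron side of this can be sketched as follows. The script path, the 10:30 p.m. schedule, and the log file are placeholders of mine, not the values from the actual setup, and `cron_line` is a made-up helper that only prints the entry.

```shell
#!/bin/sh
# Sketch: build a crontab line that runs a backup script once a day.
# cron_line and all of its arguments are hypothetical examples.
cron_line() {
    minute=$1
    hour=$2
    script=$3
    log=$4
    # crontab fields: minute hour day-of-month month day-of-week command
    printf '%s %s * * * %s >> %s 2>&1\n' "$minute" "$hour" "$script" "$log"
}

# Print the line for review; this sketch installs nothing.
cron_line 30 22 /path/to/backup_sites.sh /path/to/backup_sites.log
```

The printed line can then be pasted into the user's crontab with `crontab -e`.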

If you’re curious, the actual scripts look something like this:

```
#! /bin/sh
newtime=`date +%m%d%y_%I%M%p`

### robservatory database backup ###
### this bit is basically identical to the 2001 hint ###
ssh -l username www.robservatory.com ./backsql.sh
scp username@www.robservatory.com:backup_sql.tar \
    /path/to/Site_Backups/robservatory_sync/sql_$newtime.tar
ssh -l username www.robservatory.com rm backup_sql.sql
ssh -l username www.robservatory.com rm backup_sql.tar

### robservatory site files sync ###
rsync -avz username@www.robservatory.com:/var/www/html \
    --exclude "weblogs/" /path/to/Site_Backups/robservatory_sync
```

The first part of the script runs a shell script on the host to dump the MySQL data and then compress it via tar. Here's what that script looks like:

```
#! /bin/bash
mysqladmin -u uname -ppword flush-hosts
mysqldump --add-drop-table -h localhost \
    -u uname -ppword mysql_dbase > backup_sql.sql
tar -czf backup_sql.tar backup_sql.sql
```
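The database half of the main script keeps going even if one of its steps fails. A minimal sketch of the same three steps with exit-status checks is below; `backup_db` and its arguments are names I made up, and the year-first date format is my own substitution (it sorts chronologically), not what the original script uses.

```shell
#!/bin/sh
# Sketch: database half of the backup, stopping at the first failure.
# backup_db and its parameters are illustrative, not from the original script.
backup_db() {
    user=$1
    host=$2
    dest_dir=$3
    stamp=$(date +%Y%m%d_%H%M)   # assumption: year-first stamp for easy sorting

    # Run the dump script on the host; give up if it fails.
    ssh -l "$user" "$host" ./backsql.sh || return 1
    # Fetch the archive under a timestamped name.
    scp "$user@$host:backup_sql.tar" "$dest_dir/sql_$stamp.tar" || return 1
    # Clean up on the host only after the download succeeded.
    ssh -l "$user" "$host" rm backup_sql.sql backup_sql.tar
}
```

It would be called as `backup_db username www.robservatory.com /path/to/Site_Backups/robservatory_sync`.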

The second bit is the sync step. The rsync options -avz and --exclude work like this:

- -a - archive mode, which keeps symbolic links, permissions, ownerships, etc. intact.

- -v - verbose output, to provide more feedback.

- -z - compress file data during the transfer, to help speed things up.

- --exclude "weblogs/" - do not back up the listed directory (it's just log files I don't really need to back up).
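Before trusting a new rsync command, it can be previewed with rsync's -n (--dry-run) option, which lists what would be transferred without copying anything. A sketch, where `sync_site` is a hypothetical wrapper and `DRY_RUN` is an environment variable name I made up:

```shell
#!/bin/sh
# Sketch: wrap the rsync call so it can be previewed first.
# sync_site and DRY_RUN are hypothetical names used for illustration.
sync_site() {
    src=$1
    dest=$2
    if [ -n "${DRY_RUN:-}" ]; then
        # -n (--dry-run): report what would change, transfer nothing
        rsync -avzn --exclude "weblogs/" "$src" "$dest"
    else
        rsync -avz --exclude "weblogs/" "$src" "$dest"
    fi
}
```

For example, `DRY_RUN=1 sync_site username@www.robservatory.com:/var/www/html /path/to/Site_Backups/robservatory_sync` shows the file list only; dropping `DRY_RUN=1` performs the real sync.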

The final step in the equation, for this week at least, was to use the Energy Saver preferences panel to have my Mac wake up each evening, since I’ll be at the Expo all week. I then scheduled the cron tasks to run after the wakeup, and finally, Energy Saver puts the Mac back to sleep after a few hours, to ensure all updates are done (and that I have some time to connect remotely via ssh, if I wish).

What amazed me the most is how quickly this all came together. Not counting the initial sync time, it took maybe three hours, tops, for me to go from not knowing anything about rsync to having four sites all set up and ready to go. Gads, I love OS X!

Very cool - thanks for sharing this information! One question - in your main backup script you're pulling down a tarred sql dump file. What's the command you're using to compress the sql file?

You could take advantage of bash's here-document feature to conveniently execute multiple commands in one go over the ssh connection.

http://www.gnu.org/software/bash/manual/bashref.html#SEC42

Example:

ssh -l username http://www.robservatory.com -T

umm…

My example didn't go through, it appears greater than/lower than marks are being filtered out.

Let's try it again:

Example:

```
ssh -T -l username www.robservatory.com <<-__END__
rm backup_sql.sql
rm backup_sql.tar
__END__
```

(You could of course combine those two rm commands in other ways as well.)

This way, you could also move the contents of the remote backsql.sh script to your local script, if that seems convenient.
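Folding the host-side script into the local one might look like the sketch below. It quotes the here-document delimiter so nothing is expanded locally and feeds the commands to a remote `sh -s`; `remote_dump` is a made-up name, and uname, pword, and mysql_dbase are the same placeholders as in the post.

```shell
#!/bin/sh
# Sketch: run the dump on the host without keeping a backsql.sh there.
# remote_dump is a hypothetical helper name.
remote_dump() {
    user=$1
    host=$2
    # 'EOF' is quoted, so the local shell passes the text through untouched;
    # the remote shell reads the commands from stdin via "sh -s".
    ssh -T -l "$user" "$host" sh -s <<'EOF'
mysqldump --add-drop-table -h localhost \
    -u uname -ppword mysql_dbase > backup_sql.sql &&
tar -czf backup_sql.tar backup_sql.sql
EOF
}
```

Calling `remote_dump username www.robservatory.com` would then replace the `./backsql.sh` step.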

Rsync doesn't copy resource forks by default (even when using the -a archive flag) but adding -E to the parameters does. My normal flags are rsync -cavE to ensure decent Mac-to-Mac copying. (This applies to any extended attribute e.g. Spotlight comments.)

Rsync also traverses every file every time you sync, so the speed of a sync is proportional to the total number of files in the directory/ies on the client system. (Note: the amount of data sent across the network is proportional to the changes made, but rsync still has to scan each file on both the local and remote systems to figure out which ones have changed.)

Another tool is unison, which uses an rsync-like algorithm for its transfers. However, you might not have unison on your system, as it doesn't come with Mac OS X; you can install it via Fink or compile it yourself. Unison takes a snapshot of all the file modification times; then, when you run it again, it knows which files have changed and transfers just those between the two hosts. As a result, if you only have a few changes in thousands of files, unison can be much quicker.

It's also possible to define rules for Unison that might otherwise have to be put into a script that allows you to sync different directories (e.g. ~/Library, ~/Documents) whilst ignoring others (~/Library/Caches).

#1 (Neil):

Sorry about that; I left out the script I run on the host. I've included it now in the body of the post.

#2 (fds):

See, I told you I didn't know much about Unix :). I have used the 'here' feature before, so I'll look into making those changes.

#4 (Alex):

I should have mentioned the resource fork thing, but it's not important to me in this context, since the files are sitting on a webserver. If/when I get around to using some local rsync stuff, though, -E will be very important :).

-rob.

Rsync, used all by itself, will overwrite old versions of files. If a local file becomes corrupted, for example, you might run rsync before discovering the problem, and now the good version that was in the backup has been overwritten by the corrupted version.

rdiff-backup is also worth looking at. It is a Python script built on librsync (the library form of the rsync algorithm) that creates incremental backups. I.e. if you run it every day, it keeps old copies of files that have changed, so you can go back to an old version if need be.

Rdiff-backup backs up resource forks. The developmental version does this by default. You might have to set a flag to copy resource forks in the current stable version. Available via Fink.

http://www.nongnu.org/rdiff-backup/
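Plain rsync can also be told to keep the files it is about to overwrite, using its --backup and --backup-dir options. This is only a sketch of that idea, not a replacement for rdiff-backup; `sync_keep_old` and the `changed_` directory prefix are names I made up.

```shell
#!/bin/sh
# Sketch: keep the previous copy of any file rsync is about to replace.
# sync_keep_old and the "changed_" prefix are hypothetical names.
sync_keep_old() {
    src=$1
    dest=$2
    archive_root=$3   # use an absolute path; a relative one is taken relative to dest
    stamp=$(date +%Y%m%d)
    # --backup with --backup-dir moves replaced files aside instead of losing them
    rsync -avz --backup --backup-dir="$archive_root/changed_$stamp" "$src" "$dest"
}
```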

robg:

Backups are of no use unless you are VERY confident of being able to restore from them. Part of your backup strategy should therefore include a restore test as a sanity check after each rsync run, or after whatever program you're using to do backups. This may seem stupid, but I've heard too many people whining about lost data because of this omission.

Barry:

In general, I completely agree, especially for local Mac backups, where you're backing up the whole system. But here, I'm backing up a series of files, mainly HTML, GIF/JPEG, etc. So a 'restore' as such doesn't make much sense.

What I do test occasionally is that the database SQL dump does import properly into MySQL again...

-rob.
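The occasional import test mentioned above could be preceded by a cheaper automatic check. This sketch only confirms that a downloaded archive unpacks and contains a non-empty backup_sql.sql; it does not load anything into MySQL. `check_dump` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: sanity-check a downloaded SQL archive before relying on it.
# check_dump is a hypothetical helper; it does not test a real MySQL import.
check_dump() {
    archive=$1
    workdir=$(mktemp -d) || return 1
    # The host script builds the archive with "tar -czf", so unpack with -xzf.
    if tar -xzf "$archive" -C "$workdir" && [ -s "$workdir/backup_sql.sql" ]; then
        status=0
    else
        status=1
    fi
    rm -rf "$workdir"
    return $status
}
```

A call such as `check_dump /path/to/Site_Backups/robservatory_sync/sql_file.tar || echo "bad dump"` (with a real archive name substituted) could run right after the download step.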
